What Are Managed Agentic Security Services (MASS), and How Do They Differ From MDR?

You signed with an MDR provider expecting your team to stop drowning. Instead, you got a flood of escalations, a black box you can't audit, and junior staff on the night shift who know less about your environment than your newest hire.

The coverage was supposed to free your team for strategic work. Instead, they spend half their day re-triaging what the MDR already triaged.

This is an architecture problem. Legacy MDR goes back roughly a decade, when dedicated providers carved out a niche by saying, "we are experts in SOC investigations." They were better than MSSPs, which were generalists offering 50 different services with no specialization.

But the model still relied on junior staff running deterministic SOAR workflows: pre-coded investigation paths that break the moment an attack doesn't follow the script.

AI has changed this model. It supports non-deterministic reasoning, investigates at a greater scale, and adapts during an investigation rather than selecting from templates. But bolting AI onto the same legacy architecture doesn't solve the structural problem.

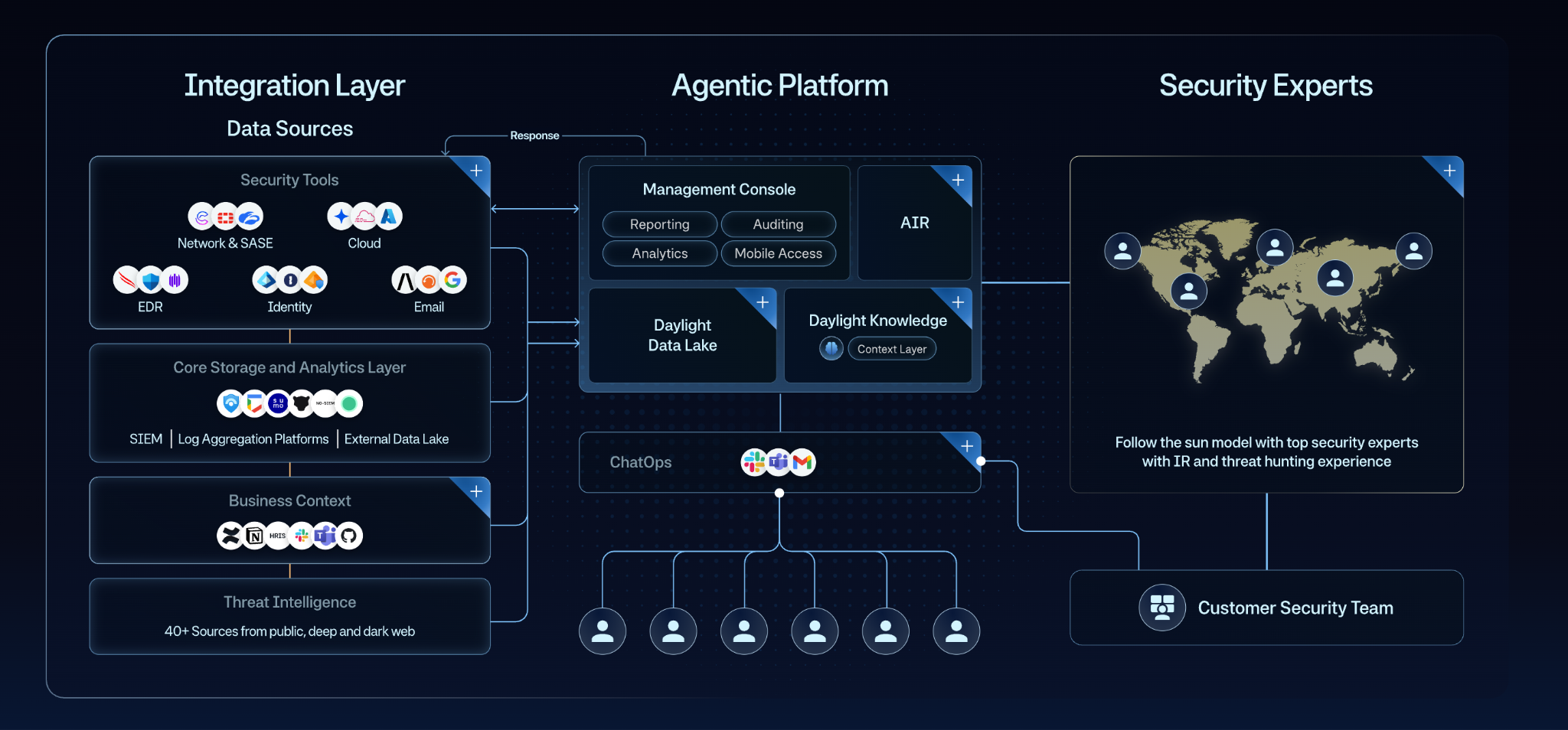

The architecture itself needs to change. That's the starting point for Managed Agentic Security Services (MASS): a new architecture for delivering modern security services. MASS combines three layers: a deep integration layer, an agentic platform, and security experts.

TL;DR:

- MASS is a new architecture for delivering modern security services. It offers a combination of an agentic platform and security experts to deliver services like MDR, threat hunting, managed phishing, managed DLP, and more.

- The bottleneck to automating security operations is context, not raw model capability. Dumping raw data on an LLM can cause hallucinations; curating telemetry, organizational, and historical context is what makes AI verdicts trustworthy.

- MASS is not a list of services. It is an architecture that enables multiple security services to run on a shared foundation of integrations, agentic investigation, and expert-led context. Where legacy MDR providers were narrow specialists, MASS delivers broad coverage with consistent investigation quality across every service.

- Security experts in MASS are system builders, not AI babysitters. Their primary role is building and scaling the context that makes AI accurate, not reviewing every AI output to catch mistakes.

What Is Managed Agentic Security Services (MASS)?

MASS is a managed security services model that uses an agentic platform and security experts to deliver multiple security operations services through an AI-native foundation.

Current MASS services include MDR, threat hunting, managed phishing, managed DLP, and more, all running on the same foundation of deep integrations, an agentic platform, and security experts with incident response and threat hunting backgrounds.

Daylight’s agentic platform consists of several core components. The Daylight data lake serves as the telemetry context layer, ingesting and structuring data from across the environment. Daylight Knowledge is the business context layer, built jointly by the customer and Daylight’s security experts, capturing organizational and historical context that does not exist in raw telemetry.

AIR (Agentic Investigation and Response) is the investigation engine that operates on top of these layers. It pulls the relevant context from both the data lake and Daylight Knowledge to investigate alerts and reach a verdict. AIR itself is built on an AI-infused orchestration system coordinating multiple specialized AI agents. Each agent is designed for a specific task, allowing for higher accuracy than a general-purpose approach.

The orchestration layer determines the next step based on the outcome of the previous one, synchronizing across agents and incorporating human input when needed. This enables investigations to adapt dynamically to the evidence rather than follow predefined playbooks.

This is architecturally distinct from both “AI SOAR workflows” and AI capabilities bolted onto legacy platforms. The entire platform was built to support both AI execution and a team of security experts.
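To make "the next step depends on the outcome of the previous one" concrete, here is a minimal Python sketch. It assumes a shared investigation state and two hypothetical agents (`identity_agent`, `endpoint_agent`); it is an illustration of the pattern, not Daylight's implementation of AIR. The `choose_next` function stands in for the reasoning step: in an agentic system a model would pick the next action from the evidence gathered so far, while here a few rules keep the sketch runnable. The loop can also hand off to a human when the evidence is inconclusive.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Investigation:
    """Shared state that every agent reads from and writes to."""
    alert: dict
    evidence: Dict[str, object] = field(default_factory=dict)
    trail: List[str] = field(default_factory=list)
    verdict: Optional[str] = None


# Hypothetical specialized agents: each does one narrow job and records findings.
def identity_agent(inv: Investigation) -> None:
    inv.evidence["mfa_passed"] = inv.alert.get("mfa_passed", False)


def endpoint_agent(inv: Investigation) -> None:
    inv.evidence["edr_detection"] = inv.alert.get("edr_detection", False)


AGENTS: Dict[str, Callable[[Investigation], None]] = {
    "identity": identity_agent,
    "endpoint": endpoint_agent,
}


def choose_next(inv: Investigation) -> str:
    """Decide the next action from the evidence gathered so far.

    Stand-in for a model-driven decision; simple rules keep the sketch runnable.
    """
    ev = inv.evidence
    if "mfa_passed" not in ev:
        return "identity"
    if ev["mfa_passed"]:
        return "verdict:benign"
    if "edr_detection" not in ev:
        return "endpoint"
    if ev["edr_detection"]:
        return "verdict:malicious"
    return "escalate"  # inconclusive evidence: bring in a human


def orchestrate(alert: dict, max_steps: int = 10) -> Investigation:
    inv = Investigation(alert=alert)
    for _ in range(max_steps):
        action = choose_next(inv)
        inv.trail.append(action)
        if action.startswith("verdict:"):
            inv.verdict = action.split(":", 1)[1]
            break
        if action == "escalate":
            inv.verdict = "needs_human_review"
            break
        AGENTS[action](inv)  # run the chosen agent; it updates inv.evidence
    return inv
```

With this sketch, `orchestrate({"mfa_passed": True})` stops after a single agent call with a benign verdict, while an alert with failed MFA and no endpoint detection runs both agents and ends in `needs_human_review`. The path is computed from evidence at each step rather than fixed in advance.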

Why Context Is the Bottleneck (Not AI Capability)

The bottleneck to automating security operations is not AI capability. It is context architecture: the ability to systematically build, maintain, and make context accessible to AI at scale. SANS 2023 SOC survey data identified lack of context as the number one SOC challenge that year, displacing alert fatigue from prior years, a signal that the industry's core problem had already shifted before AI entered the picture.

A MASS architecture addresses this through three distinct context types:

1. Telemetry Context

Machine-readable data from your environment, including identity data, endpoint activity, cloud logs, and threat intelligence. It includes all alerts and logs. It is structured and abundant, but easily becomes noise. A single alert can include over a hundred fields. The instinct is to feed everything into the model, but more data doesn’t mean better decisions.
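Here is a minimal Python sketch of curating telemetry before it reaches a model. It assumes a hypothetical per-alert-type allow-list of relevant fields (`RELEVANT_FIELDS`). Static field selection is one simple technique chosen for illustration, not a description of how Daylight's data lake tags relevance.

```python
# Hypothetical allow-lists: which fields matter for which alert type.
# A real system would derive these from detection logic and analyst input.
RELEVANT_FIELDS = {
    "impossible_travel": ["user", "src_ip", "geo_country", "prev_geo_country", "mfa_passed"],
    "malware": ["host", "user", "process_name", "parent_process", "sha256"],
}


def curate(alert: dict) -> dict:
    """Return only the fields relevant to this alert type that are actually present.

    Unknown alert types keep just their type in this sketch; a real system
    would need a fallback strategy.
    """
    alert_type = alert.get("type")
    wanted = RELEVANT_FIELDS.get(alert_type, [])
    curated = {"type": alert_type}
    curated.update({k: alert[k] for k in wanted if k in alert})
    return curated


# Example: a noisy alert with 120 filler fields plus a handful that matter.
raw_alert = {f"field_{i}": i for i in range(120)}
raw_alert.update({
    "type": "impossible_travel",
    "user": "alice",
    "src_ip": "203.0.113.7",
    "geo_country": "BR",
    "prev_geo_country": "US",
})
print(len(raw_alert), "->", len(curate(raw_alert)))  # prints: 125 -> 5
```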

2. Organizational Context

Organizational context is the human layer: policies, approved exceptions, and the rules, both formal and informal, that define what normal looks like in your environment. It is not generated by systems, and it often lives in documentation, Slack threads, and the experience of the team.

This context is inconsistent, evolving, and sometimes contradictory. It cannot be reliably inferred from raw documentation or conversations. It must be deliberately extracted and structured before it can be used in investigations. This is where our security experts shine.
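Structuring organizational context can start with turning each policy or exception into a record that can be matched against alerts. The following is a minimal Python sketch. It assumes a hypothetical `KnowledgeItem` schema with match conditions, an expiry date, and provenance, and it is not Daylight Knowledge's actual format. The expiry field reflects the point that this context evolves: an exception that has lapsed should stop explaining away alerts.

```python
from dataclasses import dataclass
from datetime import date
from typing import Dict, List, Optional


@dataclass
class KnowledgeItem:
    """One structured piece of organizational context (hypothetical schema)."""
    description: str
    match: Dict[str, str]              # alert fields that must all equal these values
    expires: Optional[date] = None     # exceptions often have an end date
    source: str = ""                   # provenance, e.g. "onboarding interview"

    def applies_to(self, alert: dict, today: date) -> bool:
        if self.expires is not None and today > self.expires:
            return False  # stale context should not influence a verdict
        return all(alert.get(k) == v for k, v in self.match.items())


def relevant_knowledge(alert: dict, items: List[KnowledgeItem], today: date) -> List[KnowledgeItem]:
    """Return the knowledge items that apply to this alert on the given day."""
    return [item for item in items if item.applies_to(alert, today)]


items = [
    KnowledgeItem(
        description="Contractor 'bob' is approved to sign in from Poland during the migration project",
        match={"user": "bob", "geo_country": "PL"},
        expires=date(2025, 12, 31),
        source="onboarding interview with IT",
    ),
]
alert = {"user": "bob", "geo_country": "PL", "type": "impossible_travel"}
```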

3. Historical Context

Collective memory from past investigations. In most SOCs, tickets say "Closed, benign" without capturing why or what actions were taken. Every departure, every vacation, every shift change resets institutional knowledge. MASS captures investigation reasoning, so it compounds over time rather than walking out the door when someone leaves.
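The contrast with a bare "Closed, benign" ticket is easiest to see in the shape of the stored record. Here is a minimal Python sketch. It assumes a hypothetical `InvestigationRecord` that keeps reasoning and actions alongside the verdict, plus a naive exact-match lookup (`CaseMemory.similar`). A production system would likely use richer similarity than matching alert type and entity.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class InvestigationRecord:
    alert_type: str
    entity: str                  # the user or host the alert concerned
    verdict: str
    reasoning: List[str]         # why the verdict was reached, not just what it was
    actions: List[str] = field(default_factory=list)


class CaseMemory:
    """In-memory store of past investigations (a real system would persist this)."""

    def __init__(self) -> None:
        self._records: List[InvestigationRecord] = []

    def record(self, rec: InvestigationRecord) -> None:
        self._records.append(rec)

    def similar(self, alert_type: str, entity: str) -> List[InvestigationRecord]:
        """Return past cases with the same alert type and entity, newest first."""
        return [r for r in reversed(self._records)
                if r.alert_type == alert_type and r.entity == entity]


memory = CaseMemory()
memory.record(InvestigationRecord(
    alert_type="impossible_travel",
    entity="alice",
    verdict="benign",
    reasoning=["Login came from corporate VPN egress in Frankfurt", "MFA passed on a known device"],
    actions=["Confirmed travel with the user's manager"],
))
```

Because the reasoning is stored with the verdict, the next analyst (or agent) who sees a similar alert for the same user can start from why it was benign last time, not just that it was.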

The Three-Part Architecture Behind MASS

MASS operates through three layers working together. Each is essential. None is sufficient on its own.

1. Integration Layer

This layer connects to security tools, identity providers, HR systems, IT asset management, and collaboration platforms. It pulls data into a dedicated data lake per customer, tags it for relevance, and makes it accessible to AI agents with precision.

Daylight's implementation is distinguished by deep, bi-directional, API-based integrations across source tools.

Bi-directional means the platform reads alerts from customer tools and writes resolution data back to close them at the source. The result is zero alert backlog in customer dashboards: Daylight investigates every alert and reports the verdict back to the originating tool.

New integrations are built in days, not months, because the integration framework itself is AI-assisted. When a customer adopts a new security tool, coverage follows quickly rather than waiting for a contract negotiation and an 8-month development cycle.
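The bi-directional loop described in this section can be sketched in a few lines of Python. This sketch assumes a hypothetical `SourceTool` interface with `fetch_open_alerts` and `close_alert` methods. Real security tools each expose their own APIs, so these names are placeholders, and `InMemoryTool` stands in for a vendor API so the example runs offline.

```python
from typing import Callable, Dict, List, Protocol


class SourceTool(Protocol):
    """Hypothetical interface over a customer security tool's API."""

    def fetch_open_alerts(self) -> List[dict]: ...

    def close_alert(self, alert_id: str, verdict: str, note: str) -> None: ...


class InMemoryTool:
    """Stand-in for a real tool so the sketch runs without network access."""

    def __init__(self, alerts: List[dict]) -> None:
        self.alerts: Dict[str, dict] = {a["id"]: {**a, "status": "open"} for a in alerts}

    def fetch_open_alerts(self) -> List[dict]:
        return [a for a in self.alerts.values() if a["status"] == "open"]

    def close_alert(self, alert_id: str, verdict: str, note: str) -> None:
        self.alerts[alert_id].update(status="closed", verdict=verdict, note=note)


def sync(tool: SourceTool, investigate: Callable[[dict], str]) -> int:
    """Investigate every open alert and write the verdict back to its source tool."""
    closed = 0
    for alert in tool.fetch_open_alerts():   # read side
        verdict = investigate(alert)
        tool.close_alert(alert["id"], verdict, note=f"Investigated: {verdict}")  # write side
        closed += 1
    return closed


tool = InMemoryTool([{"id": "a1", "sev": "low"}, {"id": "a2", "sev": "high"}])
sync(tool, investigate=lambda a: "benign" if a["sev"] == "low" else "malicious")
```

In this sketch every alert is closed with its verdict attached; a real workflow would also open an incident for malicious verdicts rather than simply closing them.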

2. Agentic Platform

The agentic platform is made up of multiple components working together. AIR (the AI investigation engine) is built from an AI-infused orchestration system that controls multiple specialized AI agents. AIR constructs response logic from evidence by querying business context, invoking specialized agents, and adapting based on findings.

3. Security Experts

In Daylight's model, security experts have 10+ years of incident response and threat hunting experience. They operate in a follow-the-sun model, not legacy shift-based coverage. This means that they’re distributed across the globe, working standard hours in their regions from Singapore to California.

Their role is fundamentally different from that of legacy MDR teams: they build the context that makes AI accurate, build integrations, improve coverage, and take over when there is a real incident.

How MASS Compares Across the Market

The managed security market breaks into four models: MASS, AI MDR services, AI SOC platforms, and traditional/legacy MDRs.

The MASS row reflects a different operating model, one where investigation, context building, and response run on a single architecture rather than stitched-together point solutions.

The Role of Security Experts in MASS

In MASS, security experts have a fundamentally different role with four distinct responsibilities.

All personalization lives in the system rather than with individual experts. Any expert can pick up an investigation with full relevant context already available, because context building and refinement are continuous.

1. Context Building and Scaling (Primary Role)

During onboarding, experts work directly with customer teams to extract undocumented business knowledge: policies, exceptions, and how the business actually operates. They tag data and add knowledge items. This work continues after onboarding as the business evolves, but at a lower volume.

This is the key distinction from competitors whose analysts merely validate AI outputs. Daylight's experts build the context that makes the AI accurate in the first place.

2. Review Edge Cases & Low-Confidence Investigations

Most investigations are handled end-to-end by the system. In more complex or ambiguous cases, where human judgment is required, a security expert reviews the investigation. The role of the expert is not just to close the case, but to ensure the investigation is complete and grounded in the right context.
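Here is a minimal Python sketch of confidence-based routing. It assumes the investigation emits a verdict with a numeric confidence score and uses a hypothetical `REVIEW_THRESHOLD`. How Daylight actually decides which cases need human judgment is not specified here; the sketch only illustrates the general pattern of sending ambiguous cases to an expert.

```python
from dataclasses import dataclass

# Hypothetical cutoff; in practice this would be tuned per environment and alert type.
REVIEW_THRESHOLD = 0.85


@dataclass
class Verdict:
    label: str          # e.g. "benign" or "malicious"
    confidence: float   # 0.0 to 1.0, as reported by the investigation


def route(v: Verdict) -> str:
    """Decide who handles an investigation once the system has reached a verdict."""
    if v.confidence < REVIEW_THRESHOLD:
        return "expert_review"        # ambiguous: a human checks the evidence and context
    if v.label == "malicious":
        return "incident_response"    # confirmed threat: experts lead resolution
    return "auto_close"               # confident benign verdict: handled end to end
```

Checking confidence first means a low-confidence "malicious" verdict is reviewed before anyone acts on it, and a low-confidence "benign" verdict is not silently closed.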

3. Incident Response Leadership

When a real threat is confirmed, Daylight's security experts lead resolution. Their backgrounds in IR at Fortune 500 companies and government agencies mean they bring context most internal security teams can't replicate.

4. Brainstorming

Experts work alongside customer teams to improve detection rules, surface findings, and raise the overall security bar. In Daylight's Glass Box model, customers can inspect what data was used, what conclusion was reached, and why.

This is the element our customers appreciate most. Teams finally feel like they have a partner who shows them what ‘great’ looks like, rather than a black box vendor protecting their switching costs.

How to Evaluate a MASS Provider

MASS is an emerging category, so evaluation should focus on architecture and experience rather than analyst quadrant placement.

Use these questions to pressure-test a provider:

- If your environment is primarily cloud with modern identity, prioritize deep integration coverage across identity, cloud, and SaaS platforms, not just endpoints.

- If you are replacing a legacy MDR and have experienced the black box problem, require Glass Box transparency as non-negotiable. Ask to see how the system arrived at a specific verdict, how explainable the AI's decisions are, and how much visibility you get into coverage and performance.

- If your team is drowning in escalations and you want to use AI-driven SOC investigations, evaluate the context architecture. All three context types (telemetry, organizational, and historical) need to be addressed, not just raw telemetry ingestion. This is what separates surface-level AI investigation from genuine AI-native investigation.

- If your alert backlogs are overflowing, ensure every alert is investigated and then closed at the source tool.

- If you need coverage beyond MDR, evaluate whether additional services run on the same architecture or are bolted-on acquisitions with separate workflows.

- If you are considering an AI SOC tool instead, be clear about the tradeoff: these tools require skilled operators 24/7, accept no liability, and cannot handle user-reported phishing. Most importantly, your team becomes responsible for building the infrastructure the AI depends on, including all of the context building.

- If you cannot validate claims through a POC with realistic incidents, treat the evaluation with caution. Architecture decks do not substitute for seeing the investigation quality firsthand. Daylight runs a 3-week POC with every prospect, and we are able to show real value within days. A capable MDR should be able to demonstrate value before you buy.

What Agentic Investigation Changes

The shift from legacy MDR to MASS is not about adding AI to the same model. The model itself was built for a different era. AI-native architecture, combined with the right human expertise, enables what the market has needed for years: a single provider delivering deep, specialized security services across the full SecOps surface with accountability, transparency, and investigation quality that compounds over time.

Daylight Security built its platform from day one as a managed service with security experts embedded by design. The same architecture powers MDR, threat hunting, the agentic data lake, managed phishing, and DLP, with more to come.

For a deeper look at how agentic investigation works in practice, explore the Daylight blog.

Frequently Asked Questions About Managed Agentic Security Services

How Does MASS Differ from AI MDR?

AI MDR is a service model. MASS is an architecture. AI MDR providers, including Daylight, deliver managed detection and response using AI-native investigation. MASS describes the broader framework that extends the same architecture across multiple security services: MDR, threat hunting, managed phishing, DLP, and more.

Every AI MDR provider that operates under a MASS architecture shares context, integrations, and expert knowledge across all those services rather than running them as separate products with separate data silos.

How Does MASS Handle the Hallucination Problem That Affects AI SOC Tools?

MASS approaches the hallucination problem differently from some AI SOC tools. In our view, hallucinations aren’t some mysterious failure mode. They’re usually the result of poor system design. When you dump massive amounts of raw telemetry into a general-purpose model and expect it to “figure out” what matters, you’re essentially forcing it to guess. That’s where hallucinations come from.

MASS is built to prevent that. Instead of relying on a single, undifferentiated model, we structure and index the data so the system knows what’s relevant before reasoning even begins. That grounding layer, paired with specialized AI components rather than a one-size-fits-all model, ensures the output is based on the right context, not probabilistic guesswork.

So rather than trying to fix hallucinations after the fact, MASS is designed to avoid creating the conditions that cause them in the first place.

How Does MASS Deliver Services Like Managed Phishing, DLP Investigation and Response Without Separate Tools?

Every service runs on the same foundation: deep integrations, an agentic platform, and security experts. Managed phishing uses the same context and agent orchestration as MDR, correlating signals across email, identity, endpoint, and cloud in one investigation.

The difference is that investigations are triggered by email alerts and user-reported suspicious emails, a gap that most AI SOC tools cannot fill. For DLP, the trigger is your incoming DLP alerts, and we perform a high-quality investigation of every alert and respond accordingly.
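Here is a minimal Python sketch of the "same foundation, different trigger" idea. It assumes hypothetical adapter functions that normalize each trigger (an endpoint alert, a user-reported email, a DLP alert) into one `InvestigationRequest`, and a simple dictionary standing in for shared context. After normalization, every service in this sketch goes through the same `investigate` step and reads the same context, so what is known about a user is available regardless of which service raised the case.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class InvestigationRequest:
    service: str    # which managed service the trigger belongs to
    entity: str     # the user or host under investigation
    payload: dict   # the original trigger data


# Hypothetical adapters: services differ only in how their trigger is normalized.
def from_edr_alert(alert: dict) -> InvestigationRequest:
    return InvestigationRequest("mdr", alert["user"], alert)


def from_user_report(report: dict) -> InvestigationRequest:
    return InvestigationRequest("phishing", report["reporter"], report)


def from_dlp_alert(alert: dict) -> InvestigationRequest:
    return InvestigationRequest("dlp", alert["user"], alert)


def investigate(req: InvestigationRequest, context: Dict[str, List[str]]) -> dict:
    """One pipeline for every service: pull shared context for the entity, then reason over it."""
    facts = context.get(req.entity, [])
    return {"service": req.service, "entity": req.entity, "context": facts}


shared_context = {"alice": ["Finance team", "Travels to Singapore monthly"]}
requests = [
    from_user_report({"reporter": "alice", "subject": "Invoice overdue"}),
    from_dlp_alert({"user": "alice", "file": "q3_forecast.xlsx"}),
]
results = [investigate(r, shared_context) for r in requests]
```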

Do I Need to Replace My Existing Security Stack to Use MASS?

No. MASS integrates with your existing tools rather than replacing them. For example, Daylight connects to security tools, identity providers, HR systems, and collaboration platforms through bi-directional integrations.

New integrations are built in days, not months. Your current stack becomes the data source; MASS provides the investigation, context, and response layer on top.