What Is Alert Fatigue in Cybersecurity? Why More Visibility Doesn't Mean Less Work

Every Security Operations Center (SOC) team we talk to describes the same breaking point. It's not a single catastrophic miss. It's the morning you open your dashboard, see 200+ alerts from the weekend, and realize you're only going to look at the critical and high-severity ones.

Everything else gets closed or ignored. You know some of those alerts might matter. You just don't have the time, the context, or the energy to find out.

That's alert fatigue. Not a theoretical risk, but the daily operational reality where most alerts are benign, and the investigative work required to confirm that eats the entire shift.

Figuring out whether a single alert is real means checking identity in Okta, device posture in the EDR, recent tickets in Jira, and business context buried in Slack threads the SOC never sees. Multiply that by hundreds of alerts per day, and the math breaks down fast.

After a few months of this, analysts stop investigating and start pattern-matching. If it looks like the last fifty alerts that went nowhere, it gets closed. The problem is that attackers know this too.

TL;DR:

- Alert fatigue is the mental and operational exhaustion caused by too many low-value or false-positive alerts, leading analysts to miss real threats.

- More tools and telemetry increase alert volume without automatically improving signal quality. Visibility has outpaced human capacity to act on it.

- The root causes are structural: overlapping detections, missing business context, and understaffed SOCs running on static playbooks.

- Solving alert fatigue requires a shift from volume-based monitoring to context-driven investigation that reaches verdicts, not escalations.

What Is Alert Fatigue in Cybersecurity?

Alert fatigue is the cognitive and operational degradation that occurs when security analysts face a constant stream of alerts. Most are false positives, duplicates, or low-priority events that don't require action.

Over time, this relentless volume erodes judgment. Analysts begin treating every alert the same way: with decreasing attention and increasing skepticism.

Alert fatigue is the gap between security investment and security outcomes. Organizations spend millions on detection tools and outsourced monitoring, but when the people reviewing those detections can't distinguish real threats from noise, the entire stack underperforms.

Sophisticated threat actors know this. A well-timed intrusion buried in a sea of routine alerts has a higher chance of slipping through undetected.

Alert fatigue operates across three dimensions:

- Cognitive: Analysts become desensitized. They stop reading alert details closely and default to quick dismissal, developing a bias toward closing alerts rather than investigating them.

- Operational: Queues grow, SLAs breach, and triage becomes reactive whack-a-mole rather than methodical investigation. Backlogs accumulate faster than teams can clear them.

- Human: The repetitive, low-reward nature of the work drives burnout, disengagement, and attrition, compounding the very staffing shortages that made alert overload a problem in the first place.

The scale makes these dynamics worse. A typical enterprise SOC receives thousands of alerts per day from across its security stack, spanning SIEM correlation rules, EDR behavioral detections, cloud misconfigurations, identity anomalies, and email security flags.

A study by IBM and Morning Consult found that about 63% of the alerts that analysts manually review are false positives or benign events. But each one still requires someone to look at it, assess it, and decide whether to investigate or dismiss it.

When most alerts lead nowhere, analysts develop a rational but dangerous coping mechanism: they start assuming alerts are noise until proven otherwise. This is the "cry wolf" effect applied to security operations, and it's exactly the condition attackers exploit.

The definition matters, but the more pressing question is what's actually driving the problem. In most organizations, it starts with how they've instrumented their cloud environments and the gaps between the tools watching them.

Why More Visibility Creates More Work, Not More Security

Organizations invest in detection breadth without investing equally in the investigation and resolution layer that makes those detections actionable. The alerts pile up. The analyst team doesn't grow proportionally. And the result is a paradox: more security tooling actually creates more risk because the team can't process the signal effectively.

Every new tool added to the security stack generates its own alert stream, with its own severity schema, its own investigation workflow, and its own portal. Without integration, overlapping tools create duplicate work. Consider a single suspicious login event. That one event might fire:

- An alert in your identity provider

- A correlated alert in your SIEM

- A behavioral anomaly flag in your EDR

- A policy violation in your cloud security platform

That's four alerts for one event, each requiring separate review, each lacking the cross-tool context to resolve quickly. Research from Devo Technology found that 84% of organizations report that analysts unknowingly investigate the same incidents multiple times per month. Redundant investigation is a structural tax on every SOC that adds tools without integrating them.

The duplicate-alert problem is bad enough, but there's a deeper issue. These tools don't just generate overlapping signals. They also can't talk to each other in ways that would make those signals meaningful. There are two context gaps at work here, and they're often confused.

Organizational Context: Knowing Whether an Alert Matters in Your Environment

When a privileged account accesses a new resource at an unusual time, the detection system has no way to know whether that's a developer deploying a scheduled release, an executive traveling internationally, or a compromised credential moving laterally.

That answer lives in your identity provider, your HR system, your team calendars, and the unwritten policies that exist in someone's head or a Slack thread.

Without this business knowledge baked into the investigation, every alert gets the same treatment regardless of whether it actually matters.

Telemetry Context: Connecting Signals Across Tools During Investigation

A user logs into Okta from a commercial VPN. Separately, a new MFA device gets enrolled on their account five minutes later. Each alert has a plausible innocent explanation: maybe they're traveling and using hotel Wi-Fi, maybe they got a new phone.

But together, it's a textbook adversary-in-the-middle pattern. Without cross-system investigation, that connection never gets made. This is what makes telemetry context different from noise reduction.

Correlation across tools does more than collapse duplicates into a single investigation. It surfaces threats that no single alert would have caught on its own.
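To make that concrete, here is a minimal sketch of cross-signal correlation for the VPN-login-plus-MFA-enrollment example. The `Event` type, the `vpn_login` and `mfa_enrolled` labels, and the ten-minute window are illustrative assumptions, not the schema of any real identity provider or SIEM.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Event:
    user: str
    kind: str  # hypothetical labels: "vpn_login", "mfa_enrolled"
    timestamp: datetime


def find_aitm_candidates(events, window=timedelta(minutes=10)):
    """Pair each VPN login with any MFA enrollment for the same user
    that happens within `window` after the login."""
    logins = [e for e in events if e.kind == "vpn_login"]
    enrollments = [e for e in events if e.kind == "mfa_enrolled"]
    pairs = []
    for login in logins:
        for enroll in enrollments:
            delta = enroll.timestamp - login.timestamp
            if enroll.user == login.user and timedelta(0) <= delta <= window:
                pairs.append((login, enroll))
    return pairs


events = [
    Event("alice", "vpn_login", datetime(2024, 1, 8, 9, 0)),
    Event("alice", "mfa_enrolled", datetime(2024, 1, 8, 9, 5)),
    Event("bob", "mfa_enrolled", datetime(2024, 1, 8, 9, 6)),  # no preceding login
]
candidates = find_aitm_candidates(events)
print(len(candidates))  # 1
```

Neither of alice's events would stand out in isolation. Only the pairing surfaces the pattern, and bob's lone enrollment stays out of the result.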

Without organizational context, analysts can't assess whether an alert matters. And without telemetry context, they can't connect it to anything else. Every alert ends up in the same undifferentiated queue, and the team triages on severity labels and gut instinct instead of evidence.

How Alert Fatigue Shows Up Across the Security Stack

These context gaps hit hardest in cloud-native environments, where security data is distributed across systems that were never designed to share it. The result is that each category of security tool creates its own flavor of fatigue, and no single fix addresses all of them.

- SIEM platforms generate correlation overload. The same security event triggers alerts at multiple analysis stages, and out-of-the-box correlation rules fire on broad patterns that were never tuned for the specific environment. Analysts spend their time sorting through cascading alerts that all trace back to one event.

- EDR and XDR systems create behavioral detection noise. Baseline deviations trigger alerts for legitimate administrative activities. A single software deployment across a thousand endpoints can generate a thousand behavioral anomaly alerts, each technically accurate, each operationally useless.

- Identity security tools produce authentication overload. Every login anomaly, directory query, and permission change generates an alert. Analysts must distinguish legitimate IT operations from attack techniques that use identical protocols, requiring deep environmental knowledge that most triage workflows don't provide.

- Email security gateways add false positive decision fatigue. Analysts spend significant time making binary allow/block decisions on flagged messages rather than conducting actual security investigations. The volume is high, the stakes feel low on each individual decision, and the repetition grinds down attention.

This compounding effect is what makes alert fatigue so persistent. Fixing noise in one tool category barely dents the overall volume because three other categories are still flooding the queue.

But the tools themselves are only part of the story. The way SOCs are designed, staffed, and measured plays an equally significant role.

Root Causes of Alert Fatigue: Technology, Process, and People

Alert fatigue rarely has a single cause. It emerges from the interaction of three layers: the tools generating alerts, the processes (or lack of processes) governing how those alerts get handled, and the people expected to make sense of it all under pressure.

How Tool Sprawl Drives Alert Noise

The most common technology driver of alert fatigue is tool sprawl with no integration strategy. Organizations deploy dozens of security tools, many with overlapping detection logic. Default detection rules ship enabled out of the box, rarely tuned to the customer's specific environment. The result is a flood of generic alerts that don't reflect actual risk.

Poor alert hygiene makes this worse. Without deduplication, suppression, or aggregation, the same event can trigger multiple independent alerts across multiple platforms.

Analysts spend their time correlating what should have been correlated automatically, manually stitching together evidence from three or four different tools to understand a single event.

The cycle feeds itself. The more alerts a team has to process, the less time anyone has to audit and tune the detections generating them. Noisy rules persist because nobody has the bandwidth to review them, which keeps alert volume high, which keeps the team buried in triage.

It's a flywheel that spins faster the longer it goes unaddressed, and most organizations don't break it because detection tuning is the first thing that gets deprioritized when the queue is full.

Why SOC Processes Fail to Reduce Alert Noise

Many SOCs lack a coherent detection strategy. Rules exist because they shipped with a product, not because someone mapped them to specific attack techniques or business risks. There's rarely a formal process for analysts to flag noisy detections and get them tuned.

Without feedback loops between triage and detection engineering, the same false positives recur indefinitely.

Risk-based prioritization is also frequently missing. When severity levels are assigned generically, without weighting for asset criticality, user context, or business impact, everything ends up labeled "high." SOC teams lose the ability to distinguish between alerts that genuinely threaten the business and alerts that are technically accurate but operationally meaningless.
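As a rough illustration of risk-based prioritization, the sketch below weights a generic severity label by asset criticality and user context. The base scores, the 1.5x and 0.25x multipliers, and the 1-to-5 criticality scale are all assumptions chosen for the example. A real scoring model would be calibrated to your own environment.

```python
# Illustrative base scores per severity label (assumed values).
SEVERITY_BASE = {"low": 1, "medium": 2, "high": 3, "critical": 4}


def risk_score(severity, asset_criticality, privileged_user, behavior_expected):
    """Combine a vendor severity label with environment context.

    asset_criticality: 1 (lab machine) to 5 (crown-jewel system), assumed scale.
    """
    score = SEVERITY_BASE[severity] * asset_criticality
    if privileged_user:
        score *= 1.5   # privileged accounts raise the stakes
    if behavior_expected:
        score *= 0.25  # known, scheduled activity is heavily discounted
    return score


# Two alerts that both arrive labeled "high":
lab_score = risk_score("high", asset_criticality=1,
                       privileged_user=False, behavior_expected=True)
payments_score = risk_score("high", asset_criticality=5,
                            privileged_user=True, behavior_expected=False)
print(lab_score, payments_score)  # 0.75 22.5
```

Both alerts carry the same label, but the weighted scores differ by a factor of 30, which is the separation a flat severity scheme cannot provide.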

The Human Cost: SOC Analyst Burnout and Attrition

Understaffed SOCs that run 24/7 coverage with on-call rotations instead of dedicated shift teams create structural vulnerabilities. Analysts working nights and weekends often lack the seniority to make confident judgment calls about unfamiliar environments, so they default to the safest option: escalate everything.

When monitoring is outsourced, this pushes the investigation burden back onto internal teams, the exact outcome that outsourced security was supposed to prevent.

The human toll is measurable. The Tines Voice of the SOC 2023 report found that 63% of SOC analysts experience burnout, with 55% saying they are likely to switch jobs within a year. The problem is compounded by understaffing: of those who felt their team was understaffed, 79% reported burnout, compared to 47% of those with adequate staffing.

When the people doing triage are constantly cycling out, every new hire starts from zero on environmental context, which feeds the escalation cycle all over again.

Meanwhile, the KPIs most SOCs track reward throughput over quality. "Alerts touched" and "tickets closed" incentivize speed, not accuracy. When the metric is how many alerts you processed rather than how many real threats you identified and contained, the system optimizes for noise management rather than threat resolution.

These root causes don't stay contained within the SOC. They ripple outward into security outcomes, workforce stability, and boardroom conversations about ROI.

The Business Impact of Alert Fatigue on Security Teams

The real danger of alert fatigue isn't operational cost. It's breach risk, and it's the kind of risk that's almost impossible to quantify until something goes wrong.

When analysts are desensitized and backlogs are growing, teams default to reviewing only high and critical-severity alerts. Everything below that threshold gets closed or ignored. That means a well-crafted attack that doesn't trigger a critical-severity rule has a better chance of slipping through undetected.

Attacker dwell times grow, not because detection tools failed, but because the team investigating those detections ran out of capacity to look at them.

That's what makes alert fatigue so difficult to measure. The alerts that matter most are often the ones that never got investigated, and you can't calculate the cost of an incident you didn't know you missed.

Security leaders who try to frame the impact through operational metrics alone are measuring the wrong thing. The real impact is the growing probability that something is already in your environment and nobody has had the time to find it.

The downstream effects are real but secondary:

- Experienced analysts doing repetitive triage work burn out and leave, compounding the staffing shortages that created the overload in the first place

- Compliance gaps emerge when investigation backlogs prevent timely documentation

- Leadership gradually loses confidence in SOC metrics because the data reflects activity volume, not actual protection

And when the CFO asks what the organization is getting for its security investment, the honest answer is often a team that spends most of its shift confirming that alerts are benign.

But it's worth being precise about causality here. Alert fatigue is the symptom. The underlying cause is alert volume that exceeds the team's capacity to investigate, whether because of tool sprawl, missing context, understaffing, or all three.

The downstream consequences listed above flow from that resource imbalance, not from "fatigue" as an abstract concept. Understanding the distinction matters because it changes where you focus the fix.

The good news is that the problem is solvable. But solving it requires working across detection strategy, context architecture, automation, and measurement simultaneously.

How to Reduce Alert Fatigue in Your SOC

Alert fatigue won't respond to a single fix. Reducing it requires coordinated changes across four areas: detection hygiene, context enrichment, automation strategy, and how you measure success.

1. Fix Detection Hygiene First

Start with the alerts you already have. The highest-impact first moves are:

- Audit your top 20 noisiest detection rules, the ones generating the highest volume with the lowest true-positive rate. Disable, tune, or consolidate them.

- Map remaining detections to MITRE ATT&CK techniques so every rule has a clear purpose and an owner responsible for its performance.

- Implement time-window correlation and asset-level aggregation to collapse related alerts into single investigation threads.

Detection hygiene reduces the noise floor, but it only addresses alerts that shouldn't have fired in the first place. The harder problem is the alerts that fire correctly but still can't be resolved without more information.
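The time-window correlation and asset-level aggregation step above can be sketched in a few lines. This is a minimal example under stated assumptions: alerts are plain dictionaries with hypothetical `source`, `asset`, and `timestamp` fields, and the 15-minute window is an arbitrary starting point.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def group_alerts(alerts, window=timedelta(minutes=15)):
    """Collapse alerts on the same asset into investigation threads.

    A new thread starts when the gap since the previous alert on that
    asset exceeds `window`.
    """
    by_asset = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a["timestamp"]):
        by_asset[alert["asset"]].append(alert)

    threads = []
    for asset_alerts in by_asset.values():
        current = [asset_alerts[0]]
        for alert in asset_alerts[1:]:
            if alert["timestamp"] - current[-1]["timestamp"] <= window:
                current.append(alert)
            else:
                threads.append(current)
                current = [alert]
        threads.append(current)
    return threads


t0 = datetime(2024, 1, 8, 9, 0)
alerts = [
    {"source": "idp", "asset": "laptop-42", "timestamp": t0},
    {"source": "siem", "asset": "laptop-42", "timestamp": t0 + timedelta(minutes=1)},
    {"source": "edr", "asset": "laptop-42", "timestamp": t0 + timedelta(minutes=2)},
    {"source": "cloud", "asset": "laptop-42", "timestamp": t0 + timedelta(minutes=4)},
    {"source": "edr", "asset": "laptop-42", "timestamp": t0 + timedelta(hours=6)},
]
threads = group_alerts(alerts)
print([len(t) for t in threads])  # [4, 1]
```

The four alerts fired by one suspicious login become a single thread, while the unrelated alert six hours later stays separate.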

2. Add Context Before Adding Coverage

Context is one of the most effective levers for reducing alert fatigue. When an alert arrives enriched with identity information, device posture, and business context, the triage decision becomes faster and more accurate.

The analyst already knows who the user is, what their role is, whether their device is managed, and whether this behavior is expected for their function. That's the difference between a 20-minute investigation and a confident call in seconds.
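A minimal sketch of what enrichment looks like in practice follows. The two dictionaries stand in for an identity provider and a device management system. Their field names (`role`, `on_travel`, `managed`) are hypothetical, and a real pipeline would query those systems through their own APIs.

```python
def enrich(alert, identity_directory, device_inventory):
    """Attach identity and device context to a raw alert before triage."""
    user = identity_directory.get(alert["user"], {})
    device = device_inventory.get(alert["device_id"], {})
    return {
        **alert,
        "role": user.get("role", "unknown"),
        "on_travel": user.get("on_travel", False),
        "device_managed": device.get("managed", False),
    }


# Stand-in lookup tables (assumed data, not a real schema).
identity_directory = {"alice": {"role": "release engineer", "on_travel": True}}
device_inventory = {"laptop-42": {"managed": True}}

raw_alert = {"rule": "login_from_new_country", "user": "alice", "device_id": "laptop-42"}
enriched = enrich(raw_alert, identity_directory, device_inventory)
print(enriched["role"], enriched["on_travel"], enriched["device_managed"])
# release engineer True True
```

With the role, travel status, and device posture already attached, the analyst (or an automated investigator) starts from an answer instead of a lookup list.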

3. Rethink SOC Automation Beyond Playbooks

Traditional SOAR playbooks automate static, predictable workflows. That helps with known scenarios, but most alert fatigue comes from the ambiguous middle ground: alerts that aren't clearly malicious and aren't clearly benign.

Agentic AI systems handle this differently. Rather than following a fixed decision tree, they pull context from multiple data sources, correlate evidence across tools, and reach investigation conclusions dynamically. The key distinction is that automation executes playbooks, while agentic investigation actually thinks through the evidence.

4. Measure SOC Quality, Not Alert Volume

Shift SOC metrics from throughput to outcomes. The metrics that actually drive improvement include:

- True-positive rate per detection rule, which creates accountability for detection quality at the source.

- Percentage of alerts resolved without human intervention, which measures how well automation and context are working together.

- Time-to-contain for confirmed incidents, which tracks what matters: how fast real threats get stopped.

- Alert-to-incident ratio, which directly quantifies the signal-to-noise problem.

Establish weekly detection review sessions where analysts flag noisy rules and detection engineers tune them. This feedback loop is what turns a reactive SOC into an improving one.
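Three of the metrics above can be computed directly from closed alert records. The sketch below assumes each record carries hypothetical `rule`, `verdict`, and `resolved_by` fields. Your ticketing or case management export will likely use different names.

```python
from collections import Counter


def rule_true_positive_rates(alerts):
    """True-positive rate for each detection rule."""
    fired = Counter(a["rule"] for a in alerts)
    confirmed = Counter(a["rule"] for a in alerts if a["verdict"] == "true_positive")
    return {rule: confirmed[rule] / fired[rule] for rule in fired}


def alert_to_incident_ratio(alerts, incident_count):
    """How many alerts the team handles per confirmed incident."""
    return len(alerts) / incident_count if incident_count else float("inf")


def auto_resolved_pct(alerts):
    """Percentage of alerts resolved without human intervention."""
    if not alerts:
        return 0.0
    automated = sum(1 for a in alerts if a["resolved_by"] == "automation")
    return 100 * automated / len(alerts)


alerts = [
    {"rule": "impossible_travel", "verdict": "benign", "resolved_by": "automation"},
    {"rule": "impossible_travel", "verdict": "benign", "resolved_by": "automation"},
    {"rule": "impossible_travel", "verdict": "benign", "resolved_by": "analyst"},
    {"rule": "impossible_travel", "verdict": "true_positive", "resolved_by": "analyst"},
    {"rule": "new_admin_grant", "verdict": "benign", "resolved_by": "automation"},
    {"rule": "new_admin_grant", "verdict": "true_positive", "resolved_by": "analyst"},
]
rates = rule_true_positive_rates(alerts)
print(rates)                                 # {'impossible_travel': 0.25, 'new_admin_grant': 0.5}
print(alert_to_incident_ratio(alerts, 2))    # 3.0
print(auto_resolved_pct(alerts))             # 50.0
```

Sorting rules by volume and true-positive rate gives the weekly review session a ranked list of tuning candidates.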

Even the best detection hygiene and metrics won't solve alert fatigue if the underlying operating model still depends on human analysts triaging every alert manually. The final shift is structural.

Moving from Alert Management to Threat Resolution

Alert fatigue is ultimately a symptom of a deeper structural problem: security operations built around monitoring and escalation rather than investigation and resolution. Tighter detection logic and better automation both help, and most organizations don't invest nearly enough in either.

But the most effective path we've seen is going further and using AI to automate the full investigation cycle from alert to verdict, rather than accelerating individual steps within the old workflow.

That shift has three requirements:

- Build the context foundation: Collect the three types of context that investigations depend on: telemetry from your security tools; organizational knowledge from identity systems, HR platforms, device management, and collaboration tools like GitHub and Notion; and historical patterns from past investigations. Without all three, any automation layer is working with incomplete information.

- Invest in agentic automation: Move beyond deterministic SOAR workflows that only handle predictable scenarios. Agentic investigation engines can pull the right context at the right time, correlate across systems, and reach high-confidence verdicts with minimal human involvement.

- Close the loop at the source: Build bi-directional integration between your investigation platform and your security tools so that when an alert is resolved as benign, it gets closed in the source tool automatically. This is the piece most organizations miss, and it's the most important one. Faster investigations help, but alert fatigue persists as long as benign alerts keep piling up in your queue. The only way to break the cycle is zero alert backlogs.
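The close-the-loop requirement can be sketched as a small write-back step. Everything here is hypothetical: the `SourceTool` interface, the `close_alert` method, and the in-memory stand-in are illustrations of the pattern, not the API of any real SIEM, EDR, or investigation platform.

```python
from typing import Protocol


class SourceTool(Protocol):
    """Assumed minimal interface a source-tool client would expose."""

    def close_alert(self, alert_id: str, reason: str) -> None: ...


class InMemoryTool:
    """Stand-in for a real client; a real one would call the vendor's API."""

    def __init__(self, open_ids):
        self.open_alerts = set(open_ids)

    def close_alert(self, alert_id, reason):
        self.open_alerts.discard(alert_id)


def close_benign(verdicts, tools):
    """Write benign verdicts back to the tool that raised each alert."""
    closed = []
    for v in verdicts:
        if v["verdict"] == "benign":
            tools[v["source"]].close_alert(v["alert_id"], reason=v["summary"])
            closed.append(v["alert_id"])
    return closed


tools = {"edr": InMemoryTool({"e-1", "e-2"}), "siem": InMemoryTool({"s-1"})}
verdicts = [
    {"alert_id": "e-1", "source": "edr", "verdict": "benign", "summary": "scheduled deployment"},
    {"alert_id": "e-2", "source": "edr", "verdict": "malicious", "summary": "credential theft"},
    {"alert_id": "s-1", "source": "siem", "verdict": "benign", "summary": "duplicate of e-1"},
]
closed = close_benign(verdicts, tools)
print(closed)                     # ['e-1', 's-1']
print(tools["edr"].open_alerts)   # {'e-2'}
```

Only the alert with a malicious verdict stays open in its source tool, so the queue reflects work that still needs a human.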

Every security team running modern tooling deals with some degree of alert fatigue. The difference is whether you're managing the volume or eliminating it. The three requirements above work together because they address the structural cause, not just the symptoms that sit on top of it.

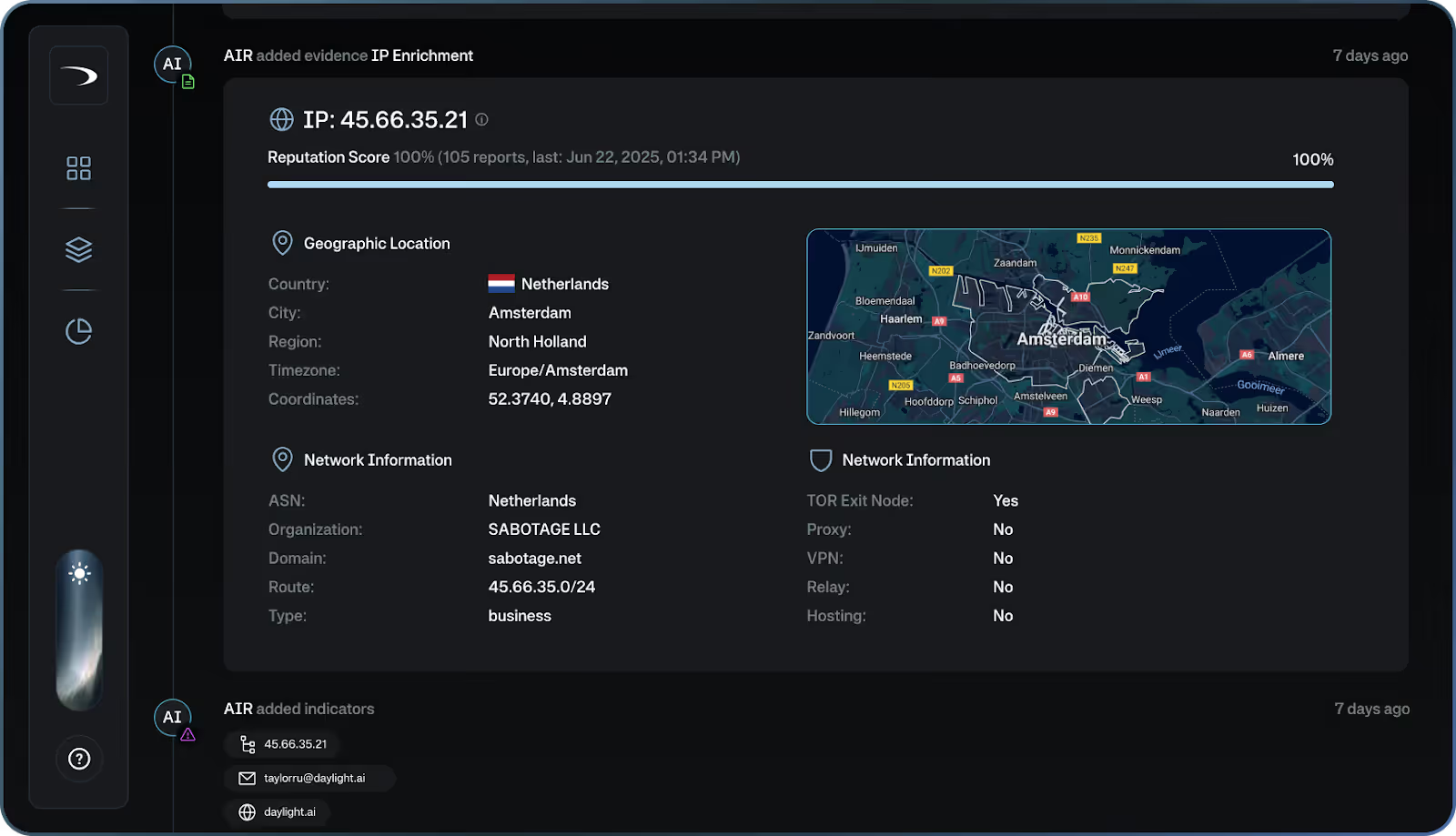

Daylight Security was built around all three of these requirements. Daylight is an agentic platform that builds telemetry, organizational, and historical context for each customer environment by integrating deeply across security tools, identity systems, HR platforms, device management, calendars, and collaboration tools like GitHub and Notion.

Its investigation engine, AIR (Agentic Investigation and Response), pulls the right context at the right time to conduct cross-system investigations that reach accurate, high-confidence verdicts.

When AIR resolves an alert as benign, it closes the alert in the source tool automatically. This bi-directional integration is what makes zero alert backlogs possible. Alerts don't just get triaged. They get resolved and removed from the queue, so the backlog that drives alert fatigue never accumulates.

Security experts with incident response and threat hunting backgrounds feed environmental knowledge back into the platform, improve detection rules based on what they observe, and lead response during confirmed incidents. Their role is to make the platform smarter over time and take command when a real incident requires human judgment.

The result is a security operations model where teams move from constant firefighting to strategic work like detection engineering, architecture hardening, and proactive threat hunting. Every investigation is fully transparent, and coverage improves continuously as the platform learns your environment.

Ready to escape the dark? Book a demo to see how Daylight brings full context, agentic investigation, and zero alert backlogs to your SOC.

Frequently Asked Questions About Alert Fatigue in Cybersecurity

What Causes Alert Fatigue in Cybersecurity?

Alert fatigue is caused by the combination of high alert volume, high false-positive rates, overlapping detections from multiple security tools, and insufficient business context to help analysts make fast triage decisions.

Can AI Solve Alert Fatigue?

AI can significantly reduce alert fatigue, but only when it has access to the right context. AI systems that simply automate existing playbooks without understanding business context, user identity, and asset criticality just automate the same noise faster.

Agentic AI systems that can pull context from identity providers, CMDBs, HR systems, and historical investigations to reach definitive verdicts are far more effective because they're actually investigating, not just triaging.

How Does Alert Fatigue Affect Security Outcomes?

Alert fatigue increases attacker dwell time because real threats get buried in noise. It drives analyst burnout and attrition, which worsens staffing shortages. It creates compliance gaps when investigation backlogs prevent timely documentation.

And it erodes leadership confidence in security metrics, making it harder to justify security investment.

Is Adding More Security Tools Making Alert Fatigue Worse?

In many cases, yes. Each new security tool generates its own alert stream, often with overlapping detection logic that fires on the same events as existing tools. Without a strategy for deduplication, correlation, and context enrichment, more tools directly translate to more noise.

The answer is not fewer tools, but an investigation layer that can synthesize signals from across the stack and reach conclusions rather than surfacing more alerts.